To install and use a new AIR SDK, you need to overlay it on an existing Flex SDK. This is a bit trickier on a Mac than on Windows because, as far as I know, you still cannot copy a folder over an existing one without deleting the contents of the folder you are overwriting.

The following steps illustrate how to install the AIR SDK using the terminal.

UPDATE April 4, 2012: I haven't done it myself yet, but the steps below also seem to work for overlaying the AIR 3.2 SDK on top of the Flex 4.6 SDK.

UPDATE October 5, 2011: I wrote this post during the pre-release period of Flash Player 11 and AIR 3. Now that both are officially released, I have changed the links to the official release URLs, but I haven't updated all the screenshots!

1. Close Flash Builder or Eclipse.

2. Look up your current SDK. I was using 4.5.1, which on my MacBook is located here:

/Applications/Adobe Flash Builder 4.5/sdks/

3. Copy the entire current SDK folder and rename the copy. I named it AIR3SDK.
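If you prefer to do the copy from the terminal as well, something like the following should work. This is only a sketch: `copy_sdk` is a helper name I made up, and the paths in the usage comment are placeholders you would replace with your own.

```shell
# Hypothetical helper: copy an existing Flex SDK folder to a new folder
# that will later receive the AIR overlay.
# Usage: copy_sdk <existing-sdk-dir> <new-sdk-dir>
copy_sdk() {
  src="$1"
  dest="$2"
  # Refuse to touch a folder that already exists, so nothing gets mixed up.
  if [ -e "$dest" ]; then
    echo "Destination already exists: $dest" >&2
    return 1
  fi
  # -R copies the folder recursively, -p keeps permissions and timestamps.
  cp -Rp "$src" "$dest"
}

# Example (placeholder paths, adjust to your install):
# copy_sdk "/path/to/sdks/<current-sdk-folder>" "/path/to/sdks/AIR3SDK"
```

Because the copy is a separate folder, the original SDK stays untouched and you can always switch back to it in Flash Builder.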

4. Download the AIR 3 SDK here, and save the file inside your newly created AIR3SDK folder.

5.

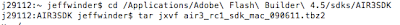

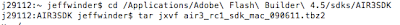

Open the terminal (Applications>Utilities>Terminal.app), and cd to the root of the

AIR3SDK folder.

You can also just type cd, add a white space and then drag the SDK folder in your terminal

username$ cd /Applications/Adobe\ Flash\ Builder\ 4.5/sdks/AIR3SDKand hit enter.

6. Now that you're in the root of the new Flex SDK folder, type or copy/paste

tar jxvf AdobeAIRSDK.tbz2

(or use the name of the file you downloaded, if it's a different version) and hit enter. This extracts the archive you downloaded and automatically overwrites any duplicate files. Below is a (NOT UPDATED) screenshot of my terminal for steps 5 and 6.
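Steps 5 and 6 can also be combined so that you don't have to cd into the folder first. The sketch below assumes a helper name of my own (`overlay_archive`) and relies on tar's `-C` option to pick the target folder.

```shell
# Hypothetical helper: extract an SDK archive on top of an existing folder.
# Files that exist in both places are overwritten; everything else in the
# folder is left alone, which is exactly the "overlay" we want.
# Usage: overlay_archive <archive> <sdk-dir>
overlay_archive() {
  archive="$1"
  sdk_dir="$2"
  if [ ! -d "$sdk_dir" ]; then
    echo "Not a directory: $sdk_dir" >&2
    return 1
  fi
  # -x extracts, -f names the archive, -C switches to the target folder.
  # Current versions of tar detect bzip2 compression on their own when
  # reading a file; the j flag in "tar jxvf" just names it explicitly.
  tar -xf "$archive" -C "$sdk_dir"
}

# Example (placeholder paths, adjust to your install):
# overlay_archive "/path/to/AIR3SDK/AdobeAIRSDK.tbz2" "/path/to/AIR3SDK"
```

Add `-v` to the tar call if you want to see every file listed as it is extracted, like in the command above.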

7.

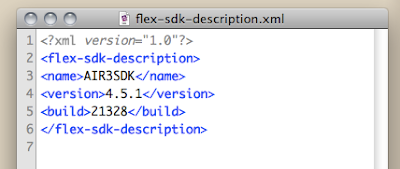

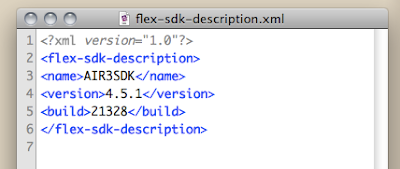

In the root of the

AIR3SDK folder, open

flex-sdk-description.xml and change the content of the

name node from

Flex 4.5.1 to

AIR3SDK.
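If you'd rather not open an editor, the name node can be changed from the terminal too. This is a rough sketch: `set_sdk_name` is a made-up helper, and it assumes the name node sits on a single line as `<name>...</name>` and that the new name contains no characters that are special to sed (such as `|`, `&` or `\`).

```shell
# Hypothetical helper: replace the text of the <name> node in
# flex-sdk-description.xml.
# Usage: set_sdk_name <sdk-dir> <new-name>
set_sdk_name() {
  sdk_dir="$1"
  new_name="$2"
  file="$sdk_dir/flex-sdk-description.xml"
  if [ ! -f "$file" ]; then
    echo "File not found: $file" >&2
    return 1
  fi
  # Write to a temporary file and move it back, which sidesteps the
  # differences between the in-place (-i) option of BSD and GNU sed.
  sed "s|<name>[^<]*</name>|<name>$new_name</name>|" "$file" > "$file.tmp" &&
    mv "$file.tmp" "$file"
}

# Example (placeholder path, adjust to your install):
# set_sdk_name "/path/to/AIR3SDK" "AIR3SDK"
```

The name you set here is what shows up in the SDK list in Flash Builder, so pick something that makes the overlay easy to recognize.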

8. Still inside the AIR3SDK folder, go to /frameworks/libs/player/ and create a folder called 11.0. Now download PlayerGlobal (.swc) here, save it in the 11.0 folder, and rename it to playerglobal.swc.
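The folder creation and rename can be done in one go from the terminal. The helper name `install_playerglobal` is my own invention, and the file name in the usage comment is a placeholder because the name of the download differs per release. Note that this sketch copies the file instead of moving it, so the download stays where it was.

```shell
# Hypothetical helper: put a downloaded playerglobal file into the SDK
# under frameworks/libs/player/<version>/playerglobal.swc.
# Usage: install_playerglobal <sdk-dir> <downloaded-swc> [version]
install_playerglobal() {
  sdk_dir="$1"
  swc="$2"
  version="${3:-11.0}"   # defaults to the 11.0 folder used in this post
  target_dir="$sdk_dir/frameworks/libs/player/$version"
  # -p creates missing parent folders and does not complain if the
  # folder already exists.
  mkdir -p "$target_dir" &&
    cp "$swc" "$target_dir/playerglobal.swc"
}

# Example (placeholder paths, adjust to your download):
# install_playerglobal "/path/to/AIR3SDK" "$HOME/Downloads/<downloaded-file>.swc"
```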

9. Open Flash Builder or Eclipse and start a new project. In the opening popup, click Configure Flex SDKs, click Add, then click Browse to navigate to the AIR3SDK folder and open it. Now you can set this SDK version as the default SDK, or select it in the dropdown list when you're back in the opening popup.

AIR 3 has some cool new features, such as Captive Runtime, which bundles the AIR runtime with your app so that the user doesn't have to download it separately. You could already do this with AIR for iOS, but it is new for Android and desktop.

Another new feature is Native Extensions, which let you integrate native code for the target device into your app. One possible result is a major performance boost.